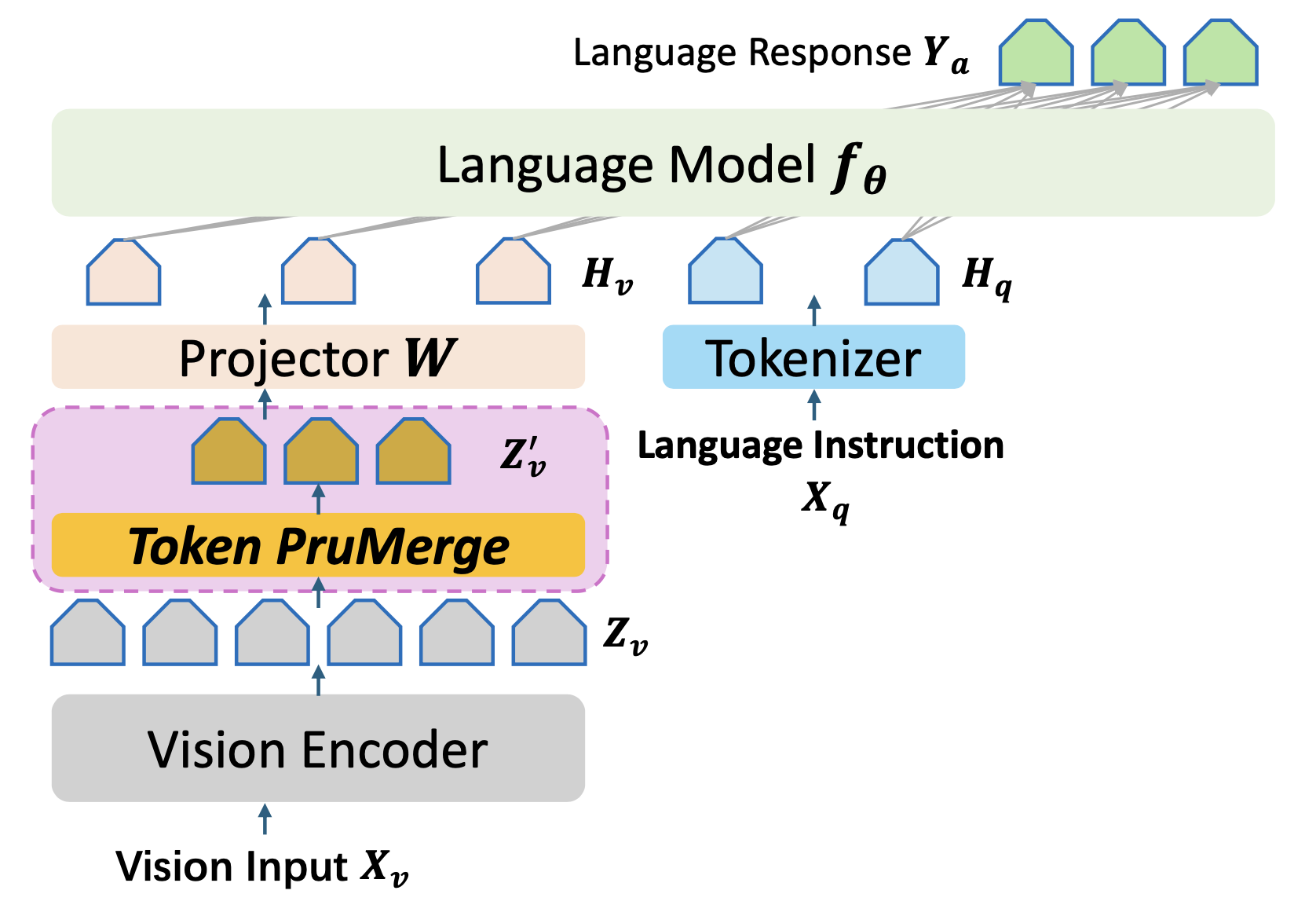

LLaVA-PruMerge: Adaptive Token Reduction for Efficient Large Multimodal Models

March 8, 2026

Accepted to ICCV 2025

The work was first posted to arXiv in March 2024.

Yuzhang Shang*, Mu Cai*, Bingxin Xu*, Yong Jae Lee^, Yan Yan^

*Equal Contribution, ^Equal Advising

[Paper] [Project Page]

How to run

Step.0: Set up the same environment as LLaVA-1.5.

Note that the core of our proposed module is here in the CLIP image encoder.

Step.1 (for inference): Download Checkpoints

Download the checkpoints (LoRA version) from Yuzhang's Hugging Face homepage and place them in checkpoints/llava-v1.5-7b-lora-prunemerge.

Step.2 (for inference): Choose the method (PruMerge or PruMerge+).

Switch the token-reduction function call here in the CLIP image encoder.
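To give a feel for what an adaptive token-reduction call does, here is a minimal pure-Python sketch. It is not the code in this repository. It assumes each visual token has a non-negative class-token attention score, keeps the tokens whose score is an upper outlier under the interquartile-range rule, and folds every pruned token into its most similar kept token with attention-weighted averaging. The function names (`select_tokens`, `prune_and_merge`) and the toy data are hypothetical.

```python
from statistics import quantiles


def select_tokens(cls_attention, k=1.5):
    """Return indices of tokens whose score exceeds Q3 + k * IQR."""
    q1, _, q3 = quantiles(cls_attention, n=4, method="inclusive")
    threshold = q3 + k * (q3 - q1)
    return [i for i, score in enumerate(cls_attention) if score > threshold]


def dot(u, v):
    """Dot product, used here as a simple similarity measure."""
    return sum(a * b for a, b in zip(u, v))


def prune_and_merge(features, cls_attention):
    """Keep outlier tokens and merge each pruned token into its nearest kept one.

    Assumes non-negative attention scores (hypothetical simplification).
    """
    kept = select_tokens(cls_attention)
    if not kept:
        # No outliers found: leave the token sequence unchanged.
        return list(range(len(features))), [list(f) for f in features]

    # groups[j] lists the tokens merged into kept token j (j itself included).
    groups = {j: [j] for j in kept}
    for i in range(len(features)):
        if i in groups:
            continue
        nearest = max(kept, key=lambda j: dot(features[i], features[j]))
        groups[nearest].append(i)

    merged = []
    for j in kept:
        total = sum(cls_attention[i] for i in groups[j])
        dim = len(features[j])
        merged.append([
            sum(cls_attention[i] * features[i][d] for i in groups[j]) / total
            for d in range(dim)
        ])
    return kept, merged


# Toy input: 8 tokens with 2-D features; tokens 2 and 5 draw most attention.
attention = [0.02, 0.03, 0.40, 0.02, 0.03, 0.35, 0.02, 0.03]
features = [[1.0, 0.0], [1.0, 0.1], [1.0, 0.0], [0.9, 0.0],
            [0.0, 1.0], [0.0, 1.0], [0.1, 1.0], [0.0, 0.9]]
kept, merged = prune_and_merge(features, attention)
print(kept, len(merged))
```

The number of kept tokens is not fixed in advance: it depends on how many scores stand out for a given image, which is what makes the reduction adaptive in this sketch.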

Step.3 (for inference): Run the script.

For example, to evaluate on TextVQA (adjust CUDA_VISIBLE_DEVICES and XDG_CACHE_HOME for your machine):

CUDA_VISIBLE_DEVICES=7 XDG_CACHE_HOME='/data/shangyuzhang/' bash scripts/v1_5/eval/textvqa.sh

For other inference scripts, refer to LLaVA Evaluation.
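The evaluation command above sets two environment variables before calling the script: CUDA_VISIBLE_DEVICES picks the GPU and XDG_CACHE_HOME points to a cache directory. If you launch several evaluations from Python, a small wrapper along these lines may help. This is only a sketch; `eval_env` and `run_eval` are hypothetical helper names, not part of this repository.

```python
import os
import subprocess


def eval_env(gpu, cache_dir, base_env=None):
    """Return a copy of the environment with GPU and cache settings applied."""
    env = dict(os.environ if base_env is None else base_env)
    env["CUDA_VISIBLE_DEVICES"] = str(gpu)  # restrict CUDA to this device index
    env["XDG_CACHE_HOME"] = str(cache_dir)  # base directory for cached files
    return env


def run_eval(script, gpu, cache_dir):
    """Run an evaluation shell script with bash; raises if the script fails."""
    return subprocess.run(
        ["bash", script],
        env=eval_env(gpu, cache_dir),
        check=True,
    )
```

Calling `run_eval` with the path to an evaluation script, a GPU index, and a cache directory of your own then mirrors the shell command.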

Reference

If you find our code useful for your research, please cite our paper.

@inproceedings{shang2025prumerge,
  title={LLaVA-PruMerge: Adaptive Token Reduction for Efficient Large Multimodal Models},
  author={Yuzhang Shang and Mu Cai and Bingxin Xu and Yong Jae Lee and Yan Yan},
  booktitle={ICCV},
  year={2025}
}