mjlab

April 17, 2026

mjlab combines Isaac Lab's manager-based API with MuJoCo Warp, a GPU-accelerated version of MuJoCo. The framework provides composable building blocks for environment design, with minimal dependencies and direct access to native MuJoCo data structures.
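In Isaac Lab's manager-based design, an environment is assembled from small, named terms: reward terms, observation terms, termination terms, and so on. A manager evaluates each term on the batched simulation state and combines the results. The sketch below is a framework-free illustration of that idea for rewards only. It assumes NumPy arrays with one row per parallel environment. `RewardTerm`, `RewardManager`, `track_lin_vel`, and `action_rate` are hypothetical names and are not mjlab's actual classes or functions.

```python
from dataclasses import dataclass
from typing import Callable, Dict

import numpy as np

# Hypothetical batched state: every array has one row per parallel environment.
State = Dict[str, np.ndarray]


@dataclass
class RewardTerm:
    """One composable reward term: a function of the batched state plus a weight."""
    func: Callable[[State], np.ndarray]
    weight: float


class RewardManager:
    """Evaluates every registered term and returns the weighted sum per environment."""

    def __init__(self, terms: Dict[str, RewardTerm]):
        self.terms = terms

    def compute(self, state: State) -> np.ndarray:
        num_envs = next(iter(state.values())).shape[0]
        total = np.zeros(num_envs)
        for term in self.terms.values():
            total += term.weight * term.func(state)
        return total


# Hypothetical terms for a velocity-tracking task.
def track_lin_vel(state: State) -> np.ndarray:
    # Exponential kernel on squared velocity error: 1.0 when tracking exactly.
    err = np.sum((state["cmd_vel"] - state["base_vel"]) ** 2, axis=1)
    return np.exp(-err / 0.25)


def action_rate(state: State) -> np.ndarray:
    # Penalize large changes between consecutive actions.
    return np.sum((state["action"] - state["prev_action"]) ** 2, axis=1)


manager = RewardManager({
    "track_lin_vel": RewardTerm(track_lin_vel, weight=1.0),
    "action_rate": RewardTerm(action_rate, weight=-0.01),
})

# Two environments: the first tracks its command exactly, the second does not.
state = {
    "cmd_vel": np.array([[1.0, 0.0], [0.5, 0.0]]),
    "base_vel": np.array([[1.0, 0.0], [0.0, 0.0]]),
    "action": np.zeros((2, 3)),
    "prev_action": np.zeros((2, 3)),
}
rewards = manager.compute(state)
```

Because each term is an independent function with its own weight, you can add, remove, or reweight terms without touching the rest of the environment. That independence is what makes the building blocks composable.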

Getting Started

mjlab requires an NVIDIA GPU for training. macOS is supported for evaluation only.

Try it now:

Run the demo (no installation needed):

uvx --from mjlab --refresh demo

Or try it in Google Colab (no local setup required).

Install from source:

git clone https://github.com/mujocolab/mjlab.git && cd mjlab

uv run demo

For alternative installation methods (PyPI, Docker), see the Installation Guide.

Training Examples

1. Velocity Tracking

Train a Unitree G1 humanoid to follow velocity commands on flat terrain:

uv run train Mjlab-Velocity-Flat-Unitree-G1 --env.scene.num-envs 4096
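The dotted flag name suggests that command-line options map onto nested configuration fields (`env` → `scene` → `num_envs`), with hyphens standing in for underscores. The sketch below illustrates that mapping with plain frozen dataclasses. It is hypothetical: `TrainCfg`, `EnvCfg`, `SceneCfg`, and `apply_override` are invented names, and mjlab's real CLI parsing is not shown here.

```python
from dataclasses import dataclass, field, replace
from typing import Any


@dataclass(frozen=True)
class SceneCfg:
    num_envs: int = 1  # hypothetical default


@dataclass(frozen=True)
class EnvCfg:
    scene: SceneCfg = field(default_factory=SceneCfg)


@dataclass(frozen=True)
class TrainCfg:
    env: EnvCfg = field(default_factory=EnvCfg)


def apply_override(cfg: Any, dotted_flag: str, value: Any) -> Any:
    """Return a copy of cfg with the field named by a flag like '--env.scene.num-envs' replaced."""
    # '--env.scene.num-envs' -> ['env', 'scene', 'num_envs']
    path = dotted_flag.lstrip("-").replace("-", "_").split(".")
    head, rest = path[0], path[1:]
    if not rest:
        return replace(cfg, **{head: value})
    # Recurse into the nested config, then rebuild each parent around the new child.
    child = getattr(cfg, head)
    return replace(cfg, **{head: apply_override(child, ".".join(rest), value)})


cfg = apply_override(TrainCfg(), "--env.scene.num-envs", 4096)
print(cfg.env.scene.num_envs)  # 4096
```

This sketch returns new frozen instances instead of mutating in place, so the default configuration stays reusable across runs.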

Multi-GPU Training: Scale to multiple GPUs using --gpu-ids:

uv run train Mjlab-Velocity-Flat-Unitree-G1 \
  --gpu-ids "[0, 1]" \
  --env.scene.num-envs 4096

See the Distributed Training guide for details.
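If you launch runs from a script, note that the `--gpu-ids` value is one argument containing a list literal. That is why it is quoted in the shell. The sketch below builds the argument list using only the flags shown in this README. `build_train_argv` is a hypothetical helper, not part of mjlab.

```python
import shlex
from typing import List, Sequence


def build_train_argv(task: str, gpu_ids: Sequence[int], num_envs: int) -> List[str]:
    """Assemble a `uv run train` argument list using only flags from this README."""
    # Render the IDs as a list literal such as "[0, 1]", passed as ONE argument.
    gpu_arg = "[" + ", ".join(str(i) for i in gpu_ids) + "]"
    return [
        "uv", "run", "train", task,
        "--gpu-ids", gpu_arg,
        "--env.scene.num-envs", str(num_envs),
    ]


argv = build_train_argv("Mjlab-Velocity-Flat-Unitree-G1", gpu_ids=[0, 1], num_envs=4096)
# Shell-safe rendering; the list literal is single-quoted:
print(shlex.join(argv))
# To launch directly: import subprocess; subprocess.run(argv, check=True)
# Passing a list to subprocess avoids shell quoting entirely.
```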

Evaluate a policy while training (fetches latest checkpoint from Weights & Biases):

uv run play Mjlab-Velocity-Flat-Unitree-G1 --wandb-run-path your-org/mjlab/run-id
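The `--wandb-run-path` value follows the Weights & Biases `entity/project/run_id` convention (here `your-org`, `mjlab`, `run-id`). If you script evaluations, a small check can catch malformed paths before launching `play`. `split_run_path` and `RunPath` below are hypothetical, not part of mjlab.

```python
from typing import NamedTuple


class RunPath(NamedTuple):
    entity: str   # W&B user or team, e.g. "your-org"
    project: str  # W&B project, e.g. "mjlab"
    run_id: str   # run ID as it appears in the run's URL


def split_run_path(path: str) -> RunPath:
    """Split an 'entity/project/run_id' string, rejecting any other shape."""
    parts = path.strip("/").split("/")
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"expected 'entity/project/run_id', got {path!r}")
    return RunPath(*parts)


print(split_run_path("your-org/mjlab/run-id"))
# RunPath(entity='your-org', project='mjlab', run_id='run-id')
```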

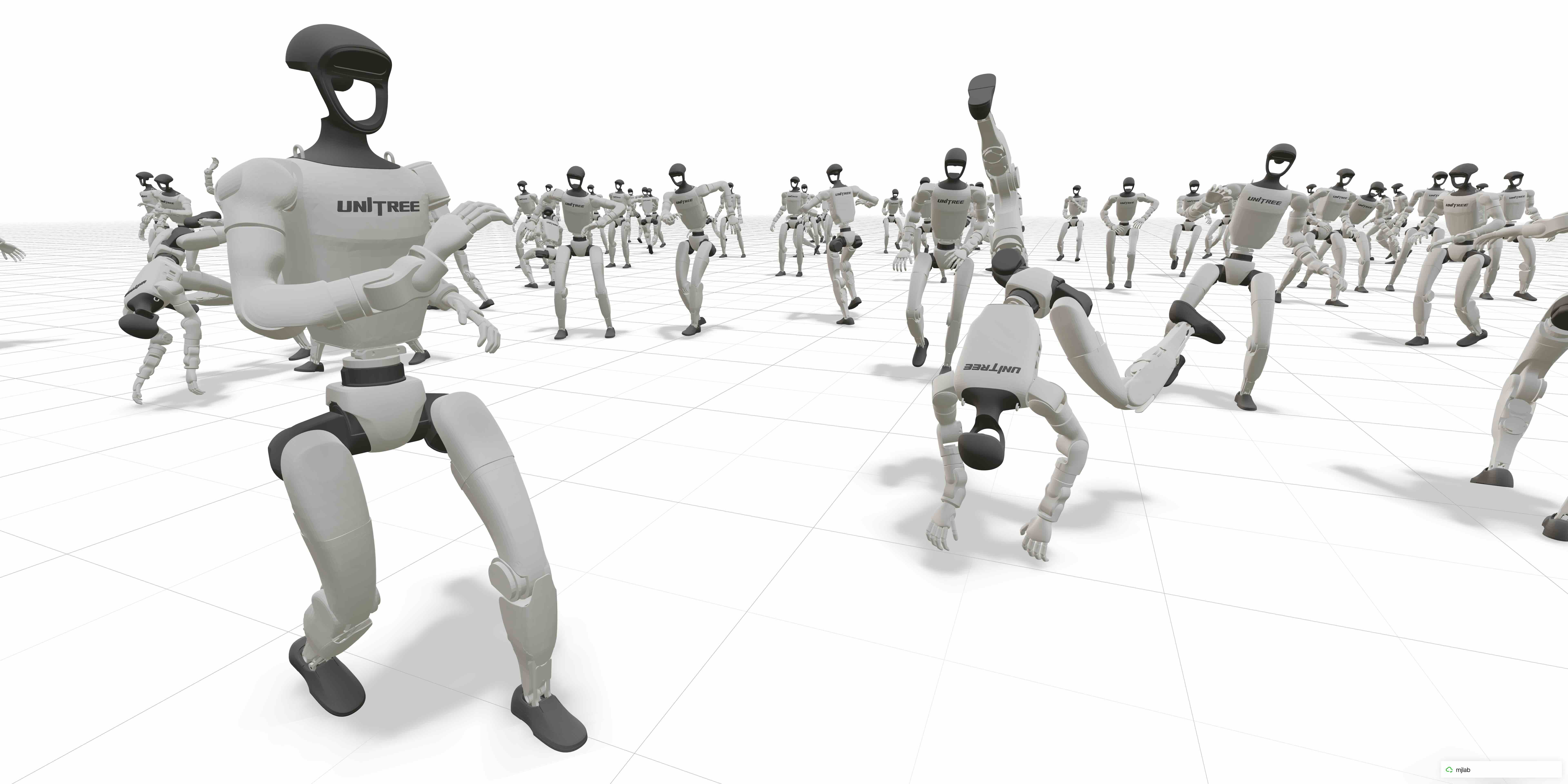

2. Motion Imitation

Train a humanoid to mimic reference motions. See the motion imitation guide for preprocessing setup.

uv run train Mjlab-Tracking-Flat-Unitree-G1 --registry-name your-org/motions/motion-name --env.scene.num-envs 4096

uv run play Mjlab-Tracking-Flat-Unitree-G1 --wandb-run-path your-org/mjlab/run-id

3. Sanity-check with Dummy Agents

Use the built-in dummy agents to sanity-check your MDP before training:

uv run play Mjlab-Your-Task-Id --agent zero # Sends zero actions

uv run play Mjlab-Your-Task-Id --agent random # Sends uniform random actions

When running motion-tracking tasks, add --registry-name your-org/motions/motion-name to the command.
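The two dummy agents ignore observations and emit either a fixed or a random batch of actions, one row per environment. The sketch below shows this idea with NumPy. It assumes actions normalized to [-1, 1] and a hypothetical action dimension of 12; the actual range, shapes, and tensor type mjlab uses may differ. `zero_agent` and `random_agent` are hypothetical names.

```python
import numpy as np


def zero_agent(num_envs: int, action_dim: int) -> np.ndarray:
    """Return an all-zero action batch of shape (num_envs, action_dim)."""
    return np.zeros((num_envs, action_dim))


def random_agent(num_envs: int, action_dim: int, rng: np.random.Generator,
                 low: float = -1.0, high: float = 1.0) -> np.ndarray:
    """Sample each action independently and uniformly from [low, high)."""
    # The [-1, 1] default range is an assumption, not mjlab's documented range.
    return rng.uniform(low, high, size=(num_envs, action_dim))


rng = np.random.default_rng(seed=0)
zeros = zero_agent(num_envs=4, action_dim=12)
rand = random_agent(num_envs=4, action_dim=12, rng=rng)
```

Neither policy learns anything. Any crash, NaN, or obviously wrong reward or termination signal that appears while running them points at the task definition rather than the training algorithm.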

Documentation

Full documentation is available at mujocolab.github.io/mjlab.

Development

make test # Run all tests

make test-fast # Skip slow tests

make format # Format and lint

make docs # Build docs locally

For development setup: uvx pre-commit install

Citation

mjlab is used in published research and open-source robotics projects. See the Research page for publications and projects, or share your own in Show and Tell.

If you use mjlab in your research, please consider citing:

@misc{zakka2026mjlablightweightframeworkgpuaccelerated,
  title={mjlab: A Lightweight Framework for GPU-Accelerated Robot Learning},
  author={Kevin Zakka and Qiayuan Liao and Brent Yi and Louis Le Lay and Koushil Sreenath and Pieter Abbeel},
  year={2026},
  eprint={2601.22074},
  archivePrefix={arXiv},
  primaryClass={cs.RO},
  url={https://arxiv.org/abs/2601.22074},
}

License

mjlab is licensed under the Apache License, Version 2.0.

Third-Party Code

Some portions of mjlab are forked from external projects:

src/mjlab/utils/lab_api/: Utilities forked from NVIDIA Isaac Lab (BSD-3-Clause license; see file headers)

Forked components retain their original licenses. See file headers for details.

Acknowledgments

mjlab wouldn't exist without the excellent work of the Isaac Lab team, whose API design and abstractions mjlab builds upon.

Thanks to the MuJoCo Warp team, especially Erik Frey and Taylor Howell, for answering our questions, giving helpful feedback, and, countless times, implementing features we requested.