July 17, 2025

👉 [[https://github.com/sponsors/xenodium][Support this work via GitHub Sponsors]]

[[https://stable.melpa.org/#/chatgpt-shell][file:https://stable.melpa.org/packages/chatgpt-shell-badge.svg]] [[https://melpa.org/#/chatgpt-shell][file:https://melpa.org/packages/chatgpt-shell-badge.svg]]

* chatgpt-shell

A multi-llm Emacs [[https://www.gnu.org/software/emacs/manual/html_node/emacs/Shell-Prompts.html][comint]] shell, by [[https://lmno.lol/alvaro][me]].

* Related packages

- [[https://github.com/xenodium/ob-chatgpt-shell][ob-chatgpt-shell]]: Evaluate chatgpt-shell blocks as Emacs org babel blocks.

- [[https://github.com/xenodium/ob-dall-e-shell][ob-dall-e-shell]]: Evaluate DALL-E shell blocks as Emacs org babel blocks.

- [[https://github.com/xenodium/dall-e-shell][dall-e-shell]]: An Emacs shell for OpenAI's DALL-E.

- [[https://github.com/xenodium/shell-maker][shell-maker]]: Create Emacs shells backed by either local or cloud services.

* News

chatgpt-shell goes multi-model 🎉

Please sponsor the project to make development + support sustainable.

| Provider   | Model         | Supported | Setup                                   |
|------------+---------------+-----------+-----------------------------------------|
| Anthropic  | Claude        | Yes       | Set =chatgpt-shell-anthropic-key=       |
| Deepseek   | Chat/Reasoner | Yes       | Set =chatgpt-shell-deepseek-key=        |
| Google     | Gemini        | Yes       | Set =chatgpt-shell-google-key=          |
| Kagi       | Summarizer    | Yes       | Set =chatgpt-shell-kagi-key=            |
| Ollama     | Llama         | Yes       | Install [[https://ollama.com/][Ollama]] |
| OpenAI     | ChatGPT       | Yes       | Set =chatgpt-shell-openai-key=          |
| OpenRouter | Various       | Yes       | Set =chatgpt-shell-openrouter-key=      |
| Perplexity | Llama Sonar   | Yes       | Set =chatgpt-shell-perplexity-key=      |

Note: With the exception of [[https://ollama.com/][Ollama]], you typically have to pay the cloud services for API access. Please check with each respective LLM service.

Is your favourite model missing? [[https://github.com/xenodium/chatgpt-shell/issues][File a feature request]] or [[https://github.com/sponsors/xenodium][sponsor the work]].

** A familiar shell

chatgpt-shell is a [[https://www.gnu.org/software/emacs/manual/html_node/emacs/Shell-Prompts.html][comint]] shell. Bring your favourite Emacs shell flows along.

#+HTML:

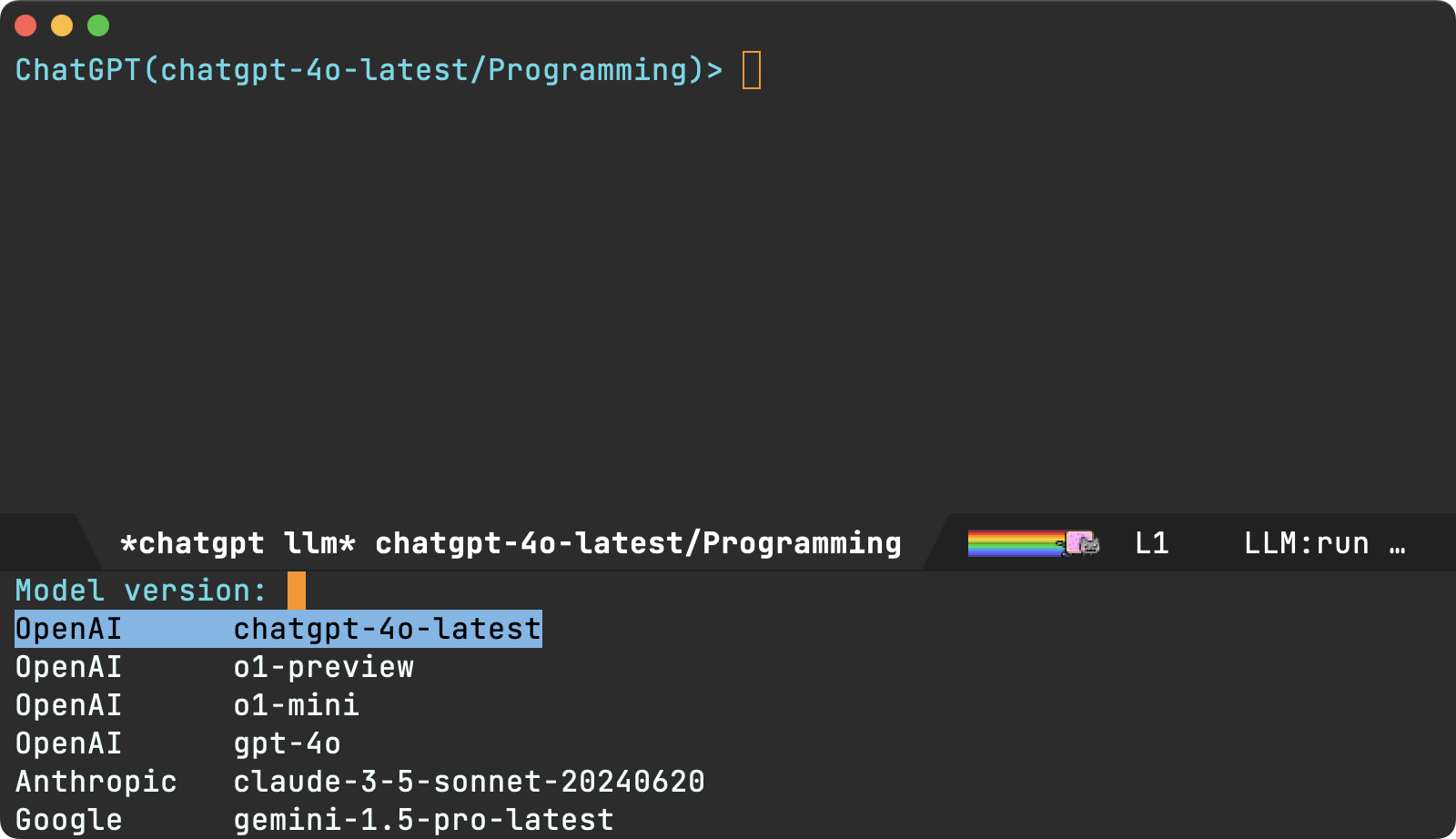

** Swap models

One shell to query all. Swap LLM provider (via =M-x chatgpt-shell-swap-model=) and continue with your familiar flow.


** A shell hybrid

=chatgpt-shell= includes a compose buffer experience. This is my favourite and most frequently used mechanism to interact with LLMs.

For example, select a region and invoke =M-x chatgpt-shell-prompt-compose= (=C-c C-e= is my preferred binding), and an editable buffer automatically copies the region and enables crafting a more thorough query. When ready, submit with the familiar =C-c C-c= binding. The buffer automatically becomes read-only and enables single-character bindings.
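If you'd like compose to be reachable from any buffer, not only from the shell, a global binding is one way to get there. This is a minimal sketch rather than a recommended setup: it reuses =C-c C-e= from above, and because =C-c C-<letter>= keys are conventionally reserved for major modes, a mode that binds the same key will shadow it in its own buffers.

#+begin_src emacs-lisp :lexical no
;; Make the compose buffer reachable from any buffer.
;; Major modes that bind C-c C-e themselves take precedence
;; over this global binding in their own buffers.
(global-set-key (kbd "C-c C-e") #'chatgpt-shell-prompt-compose)
#+end_src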


*** Navigation: n/p (or TAB/shift-TAB)

Navigate through source blocks (including previous submissions in history). Source blocks are automatically selected.

*** Reply: r

Reply with follow-up requests using the =r= binding.

*** Give me more: m

Want more of the same data? Press =m= to request more of it. This is handy for following up on any kind of list (suggestions, candidates, results, etc.).

*** Quick quit: q

I'm a big fan of quickly disposing of Emacs buffers with the =q= binding. chatgpt-shell compose buffers are no exception.

*** Request entire snippets: e

Is the LLM being lazy and returning partial code? Press =e= to request the entire snippet.

*** Quick Actions with Transient Menu

For quick access to common actions, =chatgpt-shell= provides a transient menu, powered by the excellent [[https://github.com/magit/transient][transient]] package. Think of it as a temporary keymap overlay that pops up, shows you available commands with their keybindings, and disappears after you select one.

Invoke it with =C-c C-t= while inside a =chatgpt-shell= buffer.

The menu is organized into logical groups for easy navigation:

#+BEGIN_SRC text :exports code
Shells                      Compose prompt via                 Inline edit
b: Switch to shell buffer   e: Dedicated buffer                q: Quick insert/edit
N: Create new shell         p: Minibuffer                      r: Send region
                            P: Minibuffer (include last kill)  R: Send & review region

Session                     History                            Navigation
m: Swap model               h: Search                          n: Next item
L: Reload models                                               p: Previous item
y: Swap system prompt                                          TAB: Next source block
#+END_SRC

(Note: The exact commands shown depend on context, like whether a region is active or if you are inside a =chatgpt-shell= buffer.)

If you prefer a global keybinding (available everywhere in Emacs), you can add something like this to your personal Emacs configuration:

#+begin_src emacs-lisp :lexical no
;; In your init.el or equivalent
(global-set-key (kbd "C-c C-g") #'chatgpt-shell-transient)
#+end_src

This menu helps with discovering available commands and executing them quickly without needing to remember every single keybinding or =M-x= command name.

** Confirm inline mods (via diffs)

Request inline modifications, with explicit confirmation before accepting.


** Execute snippets (a la [[https://orgmode.org/worg/org-contrib/babel/intro.html][org babel]])

Both the shell and the compose buffers enable users to execute source blocks via =C-c C-c=, leveraging [[https://orgmode.org/worg/org-contrib/babel/intro.html][org babel]].
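Since execution goes through org babel, a block should only run when babel has support loaded for its language. A sketch, assuming you mostly receive Emacs Lisp, Python, and shell snippets; that language list is illustrative, so swap in the ones you actually use.

#+begin_src emacs-lisp :lexical no
;; Enable org babel support for languages you expect in LLM responses.
;; The selection below is only an example.
(with-eval-after-load 'org
  (org-babel-do-load-languages
   'org-babel-load-languages
   '((emacs-lisp . t)
     (python . t)
     (shell . t))))
#+end_src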


** Vision experiments

I've been experimenting with image queries (currently ChatGPT only, please [[https://github.com/sponsors/xenodium][sponsor]] to help bring support for others).

Below is a handy integration to extract Japanese vocabulary. There's also a generic image descriptor available via =M-x chatgpt-shell-describe-image= that works on any Emacs image (via dired, image buffer, point on image, or selecting a desktop region).
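For quicker access, you could bind =chatgpt-shell-describe-image= in the modes you typically view images from. A sketch only: =C-c d= is an arbitrary user key chosen for illustration, and, as noted above, image queries currently need ChatGPT (an OpenAI key).

#+begin_src emacs-lisp :lexical no
;; Hypothetical convenience bindings; "C-c d" is an arbitrary user key.
;; Describe the image shown in an image buffer.
(with-eval-after-load 'image-mode
  (define-key image-mode-map (kbd "C-c d") #'chatgpt-shell-describe-image))

;; Describe the image file at point in a dired listing.
(with-eval-after-load 'dired
  (define-key dired-mode-map (kbd "C-c d") #'chatgpt-shell-describe-image))
#+end_src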


* Support this effort

If you're finding =chatgpt-shell= useful, help make the project sustainable and consider ✨[[https://github.com/sponsors/xenodium][sponsoring]]✨.

=chatgpt-shell= is in development. Please report issues or send [[https://github.com/xenodium/chatgpt-shell/pulls][pull requests]] for improvements.

* Like this package? Tell me about it 💙

Finding it useful? Like the package? I'd love to hear from you. Get in touch ([[https://indieweb.social/@xenodium][Mastodon]] / [[https://twitter.com/xenodium][Twitter]] / [[https://bsky.app/profile/xenodium.bsky.social][Bluesky]] / [[https://www.reddit.com/user/xenodium][Reddit]] / [[mailto:me__AT__xenodium.com][Email]]).

* Install

** MELPA

Via [[https://github.com/jwiegley/use-package][use-package]], you can install with =:ensure t=.

#+begin_src emacs-lisp :lexical no
(use-package chatgpt-shell
  :ensure t
  :custom
  ((chatgpt-shell-openai-key
    (lambda ()
      (auth-source-pass-get 'secret "openai-key")))))
#+end_src

** Straight

#+begin_src emacs-lisp :lexical no
(use-package shell-maker
  :straight (:type git :host github :repo "xenodium/shell-maker"))

(use-package chatgpt-shell
  :straight (:type git :host github :repo "xenodium/chatgpt-shell" :files ("chatgpt-shell*.el"))
  :custom
  ((chatgpt-shell-openai-key
    (lambda ()
      (auth-source-pass-get 'secret "openai-key")))))
#+end_src

* Swap models

=M-x chatgpt-shell-swap-model=

* Set default model

#+begin_src emacs-lisp :lexical no
(setq chatgpt-shell-model-version "llama3.2")
#+end_src

* Set API Keys

You will first need to get an API key for each of the various public LLM endpoints you want to interact with.

| Service    | Model(s)      | Link to get an API key |
|------------+---------------+------------------------|
| OpenAI     | ChatGPT       | [[https://platform.openai.com/account/api-keys][Get an API Key]] |
| Anthropic  | Claude        | [[https://console.anthropic.com/dashboard][Visit the Dashboard]] |
| Deepseek   | Chat/Reasoner | [[https://platform.deepseek.com/api_keys][Get an API Key]] |
| Google     | Gemini        | [[https://aistudio.google.com/app/apikey][Get an API Key]] |
| Kagi       | Summarizer    | [[https://kagi.com/settings?p=api][Get an API Key]] |
| OpenRouter | Various       | [[https://openrouter.ai/settings/keys][Manage your API keys]] |
| Perplexity | Llama Sonar   | [[https://docs.perplexity.ai/guides/getting-started#generate-an-api-key][Get an API Key]] |

** Provide the API Key to ChatGPT via a function

You can define a function that chatgpt-shell invokes to get the API key. The following example is for OpenAI; use a similar approach for other services.

#+begin_src emacs-lisp
;; if you are using the "pass" password manager
(setq chatgpt-shell-openai-key
      (lambda ()
        ;; (auth-source-pass-get 'secret "openai-key") ; alternative using pass support in auth-sources
        (nth 0 (process-lines "pass" "show" "openai-key"))))

;; or if using auth-sources, e.g., so the file ~/.authinfo has this line:
;;  machine api.openai.com password OPENAI_KEY
(setq chatgpt-shell-openai-key
      (auth-source-pick-first-password :host "api.openai.com"))

;; or same as previous but lazy loaded (prevents unexpected passphrase prompt)
(setq chatgpt-shell-openai-key
      (lambda ()
        (auth-source-pick-first-password :host "api.openai.com")))
#+end_src
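Following the same lazy =auth-source= pattern for other providers might look like this. It assumes you've added matching lines to =~/.authinfo=; the =:host= values are just lookup labels that need to agree with that file, not endpoints chatgpt-shell reads.

#+begin_src emacs-lisp
;; Assumes ~/.authinfo contains lines such as:
;;  machine api.anthropic.com password ANTHROPIC_KEY
;;  machine generativelanguage.googleapis.com password GOOGLE_KEY
;; The host names only need to match what you wrote in ~/.authinfo.
(setq chatgpt-shell-anthropic-key
      (lambda ()
        (auth-source-pick-first-password :host "api.anthropic.com")))

(setq chatgpt-shell-google-key
      (lambda ()
        (auth-source-pick-first-password :host "generativelanguage.googleapis.com")))
#+end_src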

** Set the appropriate variable Manually/Interactively

=M-x set-variable chatgpt-shell-anthropic-key=

=M-x set-variable chatgpt-shell-deepseek-key=

=M-x set-variable chatgpt-shell-google-key=

...

** Set the appropriate variable programmatically, in your Emacs init file

#+begin_src emacs-lisp
;; set anthropic key from a string
(setq chatgpt-shell-anthropic-key "my anthropic key")
;; set OpenAI key from the environment
(setq chatgpt-shell-openai-key (getenv "OPENAI_API_KEY"))
#+end_src

* ChatGPT through proxy service

If you use ChatGPT through a proxy service such as "https://api.chatgpt.domain.com", set options like the following:

#+begin_src emacs-lisp :lexical no
(use-package chatgpt-shell
  :ensure t
  :custom
  ((chatgpt-shell-api-url-base "https://api.chatgpt.domain.com")
   (chatgpt-shell-openai-key
    (lambda ()
      ;; Here the openai-key should be the proxy service key.
      (auth-source-pass-get 'secret "openai-key")))))
#+end_src

If your proxy service's API path is not OpenAI's default (=/v1/chat/completions=), customize the =chatgpt-shell-api-url-path= option.

* Using ChatGPT through an HTTP(S) proxy

Behind the scenes, chatgpt-shell uses =curl= to send requests to the OpenAI server. If you reach ChatGPT through an HTTP proxy (for example, you are on a corporate network where an HTTP proxy shields the network from the internet), you need to tell =curl= to use the proxy via its =-x http://your_proxy= option. To do this, use =chatgpt-shell-proxy=.

For example, to have curl invoked with =-x http://your_proxy=, set =chatgpt-shell-proxy= to ="http://your_proxy"=.
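In an init file, that amounts to a single =setq=. Here =http://your_proxy= is the placeholder from the text above, to be replaced with your proxy's real address; per the variable's docstring, socks5 proxies are also accepted.

#+begin_src emacs-lisp :lexical no
;; Placeholder URL: replace with your proxy's address (and port, if any).
;; chatgpt-shell hands this value to curl via its -x option.
(setq chatgpt-shell-proxy "http://your_proxy")
#+end_src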

* Launch

Launch with =M-x chatgpt-shell=.

Note: =M-x chatgpt-shell= keeps a single shell around, refocusing if needed. To launch multiple shells, use =C-u M-x chatgpt-shell=.
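If you'd rather always get a fresh shell without reaching for =C-u=, the =chatgpt-shell-always-create-new= option (listed among the customizations further down) appears to cover that. A minimal sketch:

#+begin_src emacs-lisp :lexical no
;; Create a new shell buffer every time M-x chatgpt-shell is invoked,
;; instead of refocusing the existing one.
(setq chatgpt-shell-always-create-new t)
#+end_src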

* Clear buffer

Type =clear= as a prompt.

#+begin_src sh
ChatGPT> clear
#+end_src

Alternatively, use either =M-x chatgpt-shell-clear-buffer= or =M-x comint-clear-buffer=.

* Saving and restoring

Save with =M-x chatgpt-shell-save-session-transcript= and restore with =M-x chatgpt-shell-restore-session-from-transcript=.

Related settings live in =shell-maker=, for example =shell-maker-transcript-default-path= and =shell-maker-forget-file-after-clear=.
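As a sketch of how those two options could be set: the directory path is only an example, and the behaviour described in the comments is an assumption based on each variable's name rather than confirmed documentation, so check =C-h v= for each before relying on it.

#+begin_src emacs-lisp :lexical no
;; Example location for saved transcripts (assumed to be a directory path).
(setq shell-maker-transcript-default-path "~/chatgpt-transcripts/")

;; Assumed behaviour: when non-nil, forget the associated transcript
;; file once the buffer is cleared.
(setq shell-maker-forget-file-after-clear t)
#+end_src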

* Streaming

=chatgpt-shell= can either wait until the entire response is received before displaying, or it can progressively display as chunks arrive (streaming).

Streaming is enabled by default. To disable it, evaluate =(setq chatgpt-shell-streaming nil)=.
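If you switch between modes often, a small command can flip the setting on demand. This helper is hypothetical (it's not shipped with chatgpt-shell) and assumes the package is already loaded; whether an already-open shell honours the change immediately is also an assumption, though it likely applies to subsequent requests.

#+begin_src emacs-lisp :lexical no
;; Hypothetical helper, not part of chatgpt-shell.
(defun my/chatgpt-shell-toggle-streaming ()
  "Toggle `chatgpt-shell-streaming' and report the new state."
  (interactive)
  (setq chatgpt-shell-streaming (not chatgpt-shell-streaming))
  (message "chatgpt-shell streaming %s"
           (if chatgpt-shell-streaming "enabled" "disabled")))
#+end_src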

* chatgpt-shell customizations

#+BEGIN_SRC emacs-lisp :results table :colnames '("Custom variable" "Description") :exports results
  (let ((rows))
    (mapatoms
     (lambda (symbol)
       (when (and (string-match "^chatgpt-shell"
                                (symbol-name symbol))
                  (custom-variable-p symbol))
         (push `(,symbol
                 ,(car
                   (split-string
                    (or (documentation-property symbol 'variable-documentation)
                        (get (indirect-variable symbol) 'variable-documentation)
                        (get symbol 'variable-documentation)
                        "")
                    "\n")))
               rows))))
    rows)
#+END_SRC

#+RESULTS:
| Custom variable | Description |
|-----------------+-------------|
| chatgpt-shell-google-api-url-base | Google API’s base URL. |
| chatgpt-shell-deepseek-api-url-base | DeepSeek API’s base URL. |
| chatgpt-shell-perplexity-key | Perplexity API key as a string or a function that loads and returns it. |
| chatgpt-shell-anthropic-thinking | When non-nil enable model thinking if available. |
| chatgpt-shell-deepseek-key | DeepSeek key as a string or a function that loads and returns it. |
| chatgpt-shell-prompt-header-write-git-commit | Prompt header of ‘git-commit’. |
| chatgpt-shell-highlight-blocks | Whether or not to highlight source blocks. |
| chatgpt-shell-prompt-compose-display-action | Choose how to display the compose buffer. |
| chatgpt-shell-display-function | Function to display the shell. Set to ‘display-buffer’ or custom function. |
| chatgpt-shell-prompt-header-generate-unit-test | Prompt header of ‘generate-unit-test’. |
| chatgpt-shell-prompt-header-refactor-code | Prompt header of ‘refactor-code’. |
| chatgpt-shell-prompt-header-proofread-region | Prompt header used by ‘chatgpt-shell-proofread-region’. |
| chatgpt-shell-openai-reasoning-effort | The amount of reasoning effort to use for OpenAI reasoning models. |
| chatgpt-shell-welcome-function | Function returning welcome message or nil for no message. |
| chatgpt-shell-perplexity-api-url-base | Perplexity API’s base URL. |
| chatgpt-shell-prompt-query-response-style | Determines the prompt style when invoking from other buffers. |
| chatgpt-shell-model-version | The active model version as either a string. |
| chatgpt-shell-kagi-key | Kagi API key as a string or a function that loads and returns it. |
| chatgpt-shell-logging | Logging disabled by default (slows things down). |
| chatgpt-shell-render-latex | Whether or not to render LaTeX blocks (experimental). |
| chatgpt-shell-swap-model-selector | Custom function to select a model during swap. |
| chatgpt-shell-api-url-base | OpenAI API’s base URL. |
| chatgpt-shell-google-key | Google API key as a string or a function that loads and returns it. |
| chatgpt-shell-ollama-api-url-base | Ollama API’s base URL. |
| chatgpt-shell-openrouter-key | OpenRouter key as a string or a function that loads and returns it. |
| chatgpt-shell-babel-headers | Additional headers to make babel blocks work. |
| chatgpt-shell--pretty-smerge-mode-hook | Hook run after entering or leaving ‘chatgpt-shell--pretty-smerge-mode’. |
| chatgpt-shell-include-local-file-link-content | Non-nil includes linked file content in requests. |
| chatgpt-shell-compose-auto-transient | When non-nil automatically display transient menu post compose submission. |
| chatgpt-shell-source-block-actions | Block actions for known languages. |
| chatgpt-shell-default-prompts | List of default prompts to choose from. |
| chatgpt-shell-anthropic-key | Anthropic API key as a string or a function that loads and returns it. |
| chatgpt-shell-always-create-new | Non-nil creates a new shell buffer every time ‘chatgpt-shell’ is invoked. |
| chatgpt-shell-screenshot-command | The program to use for capturing screenshots. |
| chatgpt-shell-prompt-header-eshell-summarize-last-command-output | Prompt header of ‘eshell-summarize-last-command-output’. |
| chatgpt-shell-system-prompt | The system prompt ‘chatgpt-shell-system-prompts’ index. |
| chatgpt-shell-transmitted-context-length | Controls the amount of context provided to chatGPT. |
| chatgpt-shell-root-path | Root path location to store internal shell files. |
| chatgpt-shell-prompt-header-whats-wrong-with-last-command | Prompt header of ‘whats-wrong-with-last-command’. |
| chatgpt-shell-read-string-function | Function to read strings from user. |
| chatgpt-shell-swap-model-filter | Filter models to swap from using this function as a filter. |
| chatgpt-shell-after-command-functions | Abnormal hook (i.e. with parameters) invoked after each command. |
| chatgpt-shell-system-prompts | List of system prompts to choose from. |
| chatgpt-shell-openai-key | OpenAI key as a string or a function that loads and returns it. |
| chatgpt-shell-proxy | When non-nil, use as a proxy (for example http or socks5). |
| chatgpt-shell-prompt-header-describe-code | Prompt header of ‘describe-code’. |
| chatgpt-shell-insert-dividers | Whether or not to display a divider between requests and responses. |
| chatgpt-shell-models | The list of supported models to swap from. |
| chatgpt-shell-openrouter-api-url-base | OpenRouter API’s base URL. |
| chatgpt-shell-language-mapping | Maps external language names to Emacs names. |
| chatgpt-shell-prompt-compose-view-mode-hook | Hook run after entering or leaving ‘chatgpt-shell-prompt-compose-view-mode’. |
| chatgpt-shell-streaming | Whether or not to stream ChatGPT responses (show chunks as they arrive). |
| chatgpt-shell-anthropic-api-url-base | Anthropic API’s base URL. |
| chatgpt-shell-model-temperature | What sampling temperature to use, between 0 and 2, or nil. |
| chatgpt-shell-anthropic-thinking-budget-tokens | The token budget allocated for Anthropic model thinking. |
| chatgpt-shell-request-timeout | How long to wait for a request to time out in seconds. |
| chatgpt-shell-kagi-api-url-base | Kagi API’s base URL. |

There are more; browse them via =M-x set-variable=.
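For instance, a few of the options above could be set from your init file as follows. The values are illustrative only; the comments restate each variable's docstring.

#+begin_src emacs-lisp :lexical no
;; Example values only; pick what suits your setup.
(setq chatgpt-shell-request-timeout 120)   ; seconds before a request times out
(setq chatgpt-shell-model-temperature 0.7) ; sampling temperature, 0 to 2, or nil
(setq chatgpt-shell-insert-dividers t)     ; divider between requests and responses
#+end_src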

** =chatgpt-shell-display-function= (with custom function)

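If you'd prefer your own custom display function, set =chatgpt-shell-display-function= to it. For example, the following displays the shell in a dedicated frame named "chatgpt":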

#+begin_src emacs-lisp :lexical no
(setq chatgpt-shell-display-function #'my/chatgpt-shell-frame)

(defun my/chatgpt-shell-frame (bname)
  (let ((cur-f (selected-frame))
        (f (my/find-or-make-frame "chatgpt")))
    (select-frame-by-name "chatgpt")
    (pop-to-buffer-same-window bname)
    (set-frame-position f (/ (display-pixel-width) 2) 0)
    (set-frame-height f (frame-height cur-f))
    (set-frame-width f (frame-width cur-f) 1)))

(defun my/find-or-make-frame (fname)
  (condition-case
      nil
      (select-frame-by-name fname)
    (error (make-frame `((name . ,fname))))))
#+end_src

Thanks to [[https://github.com/tuhdo][tuhdo]] for the custom display function.
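If a dedicated frame is more than you need, the variable's docstring also names =display-buffer= as a valid value, which leaves placement to your usual =display-buffer-alist= rules. A minimal sketch:

#+begin_src emacs-lisp :lexical no
;; Let display-buffer (and any display-buffer-alist rules) decide
;; where the shell appears.
(setq chatgpt-shell-display-function #'display-buffer)
#+end_src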

* chatgpt-shell commands

#+BEGIN_SRC emacs-lisp :results table :colnames '("Binding" "Command" "Description") :exports results
  (let ((rows))
    (mapatoms
     (lambda (symbol)
       (when (and (string-match "^chatgpt-shell"
                                (symbol-name symbol))
                  (commandp symbol))
         (push `(,(string-join
                   (seq-filter
                    (lambda (symbol)
                      (not (string-match "menu" symbol)))
                    (mapcar
                     (lambda (keys)
                       (key-description keys))
                     (or
                      (where-is-internal
                       (symbol-function symbol)
                       comint-mode-map
                       nil nil (command-remapping 'comint-next-input))
                      (where-is-internal
                       symbol chatgpt-shell-mode-map nil nil (command-remapping symbol))
                      (where-is-internal
                       (symbol-function symbol) chatgpt-shell-mode-map nil nil (command-remapping symbol)))))
                   " or ")
                 ,(symbol-name symbol)
                 ,(car
                   (split-string
                    (or (documentation symbol t) "")
                    "\n")))
               rows))))
    rows)
#+END_SRC

#+RESULTS:
| Binding | Command | Description |
|---------+---------+-------------|
| | chatgpt-shell-japanese-lookup | Look Japanese term up. |
| | chatgpt-shell-next-source-block | Move point to the next source block's body. |
| | chatgpt-shell-prompt-compose-request-entire-snippet | If the response code is incomplete, request the entire snippet. |
| | chatgpt-shell-prompt-compose-request-more | Request more data. This is useful if you already requested examples. |
| | chatgpt-shell-google-toggle-grounding-with-google-search | Toggle the ‘:grounding-search’ boolean for the currently-selected model. |
| | chatgpt-shell-execute-babel-block-action-at-point | Execute block as org babel. |
| C-c C-s | chatgpt-shell-swap-system-prompt | Swap system prompt from ‘chatgpt-shell-system-prompts’. |
| | chatgpt-shell-system-prompts-menu | ChatGPT |
| | chatgpt-shell-prompt-compose-swap-model-version | Swap the compose buffer's model version. |
| | chatgpt-shell-describe-code | Describe code from region using ChatGPT. |
| C-chatgpt-shell-models'. |
| C-x C-s | chatgpt-shell-save-session-transcript | Save shell transcript to file. |
| | chatgpt-shell-proofread-region | Proofread text from region or current paragraph using ChatGPT. |
| | chatgpt-shell-prompt-compose-quit-and-close-frame | Quit compose and close frame if it's the last window. |
| | chatgpt-shell-prompt-compose-other-buffer | Jump to the shell buffer (compose's other buffer). |
| | chatgpt-shell | Start a ChatGPT shell interactive command. |
| RET | chatgpt-shell-submit | Submit current input. |
| | chatgpt-shell-prompt-compose-swap-system-prompt | Swap the compose buffer's system prompt. |
| | chatgpt-shell-describe-image | Request OpenAI to describe image. |
| | chatgpt-shell-prompt-compose-search-history | Search prompt history, select, and insert to current compose buffer. |
| | chatgpt-shell-prompt-compose-previous-history | Insert previous prompt from history into compose buffer. |
| | chatgpt-shell-delete-interaction-at-point | Delete interaction (request and response) at point. |
| | chatgpt-shell-anthropic-toggle-thinking | Toggle Anthropic model, as per ‘chatgpt-shell-anthropic-thinking’. |
| | chatgpt-shell-refresh-rendering | Refresh markdown rendering by re-applying to entire buffer. |
| | chatgpt-shell-prompt-compose-insert-block-at-point | Insert block at point at last known location. |
| | chatgpt-shell-explain-code | Describe code from region using ChatGPT. |
| | chatgpt-shell-execute-block-action-at-point | Execute block at point. |
| | chatgpt-shell-load-awesome-prompts | Load ‘chatgpt-shell-system-prompts’ from awesome-chatgpt-prompts. |
| | chatgpt-shell-write-git-commit | Write commit from region using ChatGPT. |
| | chatgpt-shell-restore-session-from-transcript | Restore session from file transcript (or HISTORY). |
| | chatgpt-shell-prompt-compose-next-interaction | Show next interaction (request / response). |
| <backtab> or C-c C-p | chatgpt-shell-previous-item | Go to previous item. |
| | chatgpt-shell-fix-error-at-point | Fixes flymake error at point. |
| | chatgpt-shell-next-link | Move point to the next link. |
| | chatgpt-shell-prompt-compose-transient | ChatGPT Shell Compose Transient. |
| | chatgpt-shell-prompt-compose-clear-history | Clear compose and associated shell history. |
| | chatgpt-shell-prompt-appending-kill-ring | Make a ChatGPT request from the minibuffer appending kill ring. |
| | chatgpt-shell-ollama-load-models | Query ollama for the locally installed models and add them to |
| C-<down> or M-n | chatgpt-shell-next-input | Cycle forwards through input history. |
| | chatgpt-shell-prompt-compose-view-mode | Like view-mode, but extended for ChatGPT Compose. |
| | chatgpt-shell-clear-buffer | Clear the current shell buffer. |
| | chatgpt-shell-insert-local-file-link | Select and insert a link to a local file. |
| | chatgpt-shell-edit-block-at-point | Execute block at point. |
| <tab> or C-c C-n | chatgpt-shell-next-item | Go to next item. |
| | chatgpt-shell-prompt-compose-send-buffer | Send compose buffer content to shell for processing. |
| C-c C-e | chatgpt-shell-prompt-compose | Compose and send prompt from a dedicated buffer. |
| | chatgpt-shell-rename-buffer | Rename current shell buffer. |
| | chatgpt-shell-remove-block-overlays | Remove block overlays. Handy for renaming blocks. |
| | chatgpt-shell-send-region | Send region to ChatGPT. |
| | chatgpt-shell-send-and-review-region | Send region to ChatGPT, review before submitting. |
| C-M-h | chatgpt-shell-mark-at-point-dwim | Mark source block if at point. Mark all output otherwise. |
| | chatgpt-shell-insert-buffer-file-link | Select and insert a link to a buffer's local file. |
| | chatgpt-shell--pretty-smerge-mode | Minor mode to display overlays for conflict markers. |
| | chatgpt-shell-mark-block | Mark current block in compose buffer. |
| | chatgpt-shell-prompt-compose-reply | Reply as a follow-up and compose another query. |
| | chatgpt-shell-prompt-compose-refresh | Refresh compose buffer content with current item from shell. |
| | chatgpt-shell-set-as-primary-shell | Set as primary shell when there are multiple sessions. |
| | chatgpt-shell-google-load-models | Query Google for the list of Gemini LLM models available. |
| | chatgpt-shell-rename-block-at-point | Rename block at point (perhaps a different language). |
| | chatgpt-shell-quick-insert | Request from minibuffer and insert response into current buffer. |
| | chatgpt-shell-reload-default-models | Reload all available models. |
| S-<return> | chatgpt-shell-newline | Insert a newline, and move to left margin of the new line. |
| | chatgpt-shell-generate-unit-test | Generate unit-test for the code from region using ChatGPT. |
| | chatgpt-shell-prompt-compose-view-last | Display the last request/response interaction. |
| | chatgpt-shell-prompt-compose-previous-item | Jump to and select previous item (request, response, block, link, interaction). |
| | chatgpt-shell-prompt-compose-next-history | Insert next prompt from history into compose buffer. |
| C-c C-c | chatgpt-shell-ctrl-c-ctrl-c | If point in source block, execute it. Otherwise interrupt. |
| | chatgpt-shell-eshell-summarize-last-command-output | Ask ChatGPT to summarize the last command output. |
| M-r | chatgpt-shell-search-history | Search previous input history. |
| | chatgpt-shell-mode | Major mode for ChatGPT shell. |
| | chatgpt-shell-prompt-compose-mode | Major mode for composing ChatGPT prompts from a dedicated buffer. |
| | chatgpt-shell-previous-source-block | Move point to the previous source block's body. |
| | chatgpt-shell-prompt | Make a ChatGPT request from the minibuffer. |
| | chatgpt-shell-japanese-ocr-lookup | Select a region of the screen to OCR and look up in Japanese. |
| | chatgpt-shell-refactor-code | Refactor code from region using ChatGPT. |
| | chatgpt-shell-proofread-paragraph-or-region | Proofread text from region or current paragraph using ChatGPT. |
| | chatgpt-shell-view-block-at-point | View code block at point (using language's major mode). |
| | chatgpt-shell-japanese-audio-lookup | Transcribe audio at current file (buffer or ‘dired’) and look up in Japanese. |
| | chatgpt-shell-eshell-whats-wrong-with-last-command | Ask ChatGPT what's wrong with the last eshell command. |
| | chatgpt-shell-prompt-compose-cancel | Cancel and close compose buffer. |
| | chatgpt-shell-prompt-compose-retry | Retry sending request to shell. |
| | chatgpt-shell-version | Show ‘chatgpt-shell’ mode version. |
| | chatgpt-shell-prompt-compose-previous-interaction | Show previous interaction (request / response). |
| | chatgpt-shell-interrupt | Interrupt ‘chatgpt-shell’ from any buffer. |
| | chatgpt-shell-view-at-point | View prompt and output at point in a separate buffer. |

Browse all available via =M-x=.

* Feature requests

- Please go through this README to see if the feature is already supported.

- Need custom behaviour? Check out existing [[https://github.com/xenodium/chatgpt-shell/issues?q=is%3Aissue+][issues/feature requests]]. You may find solutions in discussions.

* Pull requests

Pull requests are super welcome. Please [[https://github.com/xenodium/chatgpt-shell/issues/new][reach out]] before getting started to make sure we're not duplicating effort. Also [[https://github.com/xenodium/chatgpt-shell/][search existing discussions]].

* Reporting bugs

** Setup isn't working?

Please share the entire snippet you've used to set =chatgpt-shell= up (but redact your key). Share any errors you encountered. Read on for sharing additional details.

** Found runtime/elisp errors?

Please enable =M-x toggle-debug-on-error=, reproduce the error, and share the stack trace.

** Found unexpected behaviour?

Please enable logging =(setq chatgpt-shell-logging t)= and share the content of the =chatgpt-log= buffer in the bug report.

** Babel issues?

Please also share the entire org snippet.

* Support my work

👉 Find my work useful? [[https://github.com/sponsors/xenodium][Support this work via GitHub Sponsors]] or [[https://apps.apple.com/us/developer/xenodium-ltd/id304568690][buy my iOS apps]].

* My other utilities, packages, apps, writing...

- [[https://xenodium.com/][Blog (xenodium.com)]]

- [[https://lmno.lol/alvaro][Blog (lmno.lol/alvaro)]]

- [[https://plainorg.com][Plain Org]] (iOS)

- [[https://flathabits.com][Flat Habits]] (iOS)

- [[https://apps.apple.com/us/app/scratch/id1671420139][Scratch]] (iOS)

- [[https://github.com/xenodium/macosrec][macosrec]] (macOS)

- [[https://apps.apple.com/us/app/fresh-eyes/id6480411697?mt=12][Fresh Eyes]] (macOS)

- [[https://github.com/xenodium/dwim-shell-command][dwim-shell-command]] (Emacs)

- [[https://github.com/xenodium/company-org-block][company-org-block]] (Emacs)

- [[https://github.com/xenodium/org-block-capf][org-block-capf]] (Emacs)

- [[https://github.com/xenodium/ob-swiftui][ob-swiftui]] (Emacs)

- [[https://github.com/xenodium/chatgpt-shell][chatgpt-shell]] (Emacs)

- [[https://github.com/xenodium/ready-player][ready-player]] (Emacs)

- [[https://github.com/xenodium/sqlite-mode-extras][sqlite-mode-extras]] (Emacs)

- [[https://github.com/xenodium/ob-chatgpt-shell][ob-chatgpt-shell]] (Emacs)

- [[https://github.com/xenodium/dall-e-shell][dall-e-shell]] (Emacs)

- [[https://github.com/xenodium/ob-dall-e-shell][ob-dall-e-shell]] (Emacs)

- [[https://github.com/xenodium/shell-maker][shell-maker]] (Emacs)

* Contributors


Made with [[https://contrib.rocks][contrib.rocks]].